We've cooked up a bunch of improvements designed to reduce friction and make the.

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

Unordered list

Bold text

Emphasis

Superscript

Subscript

We're excited to announce the launch of the Runpod Assistant: a free, built-in chatbot that lets you manage your pods, endpoints, and infrastructure using plain English. Whether you need to spin up a pod, check GPU availability across data centers, or shut everything down at once, the Assistant puts those actions a conversation away.

The Runpod Assistant is a chatbot available at any time from the upper right corner of the Runpod console. It's knowledgeable about the Runpod ecosystem and AI more generally, and it offers functionality comparable to our REST API, but through natural language instead of code.

Think of it as a conversational interface to your Runpod account. You can ask it questions, give it commands, or use it as a sounding board when you're trying to figure out the right GPU for a workload.

Here are some things you can do with the Assistant right out of the box:

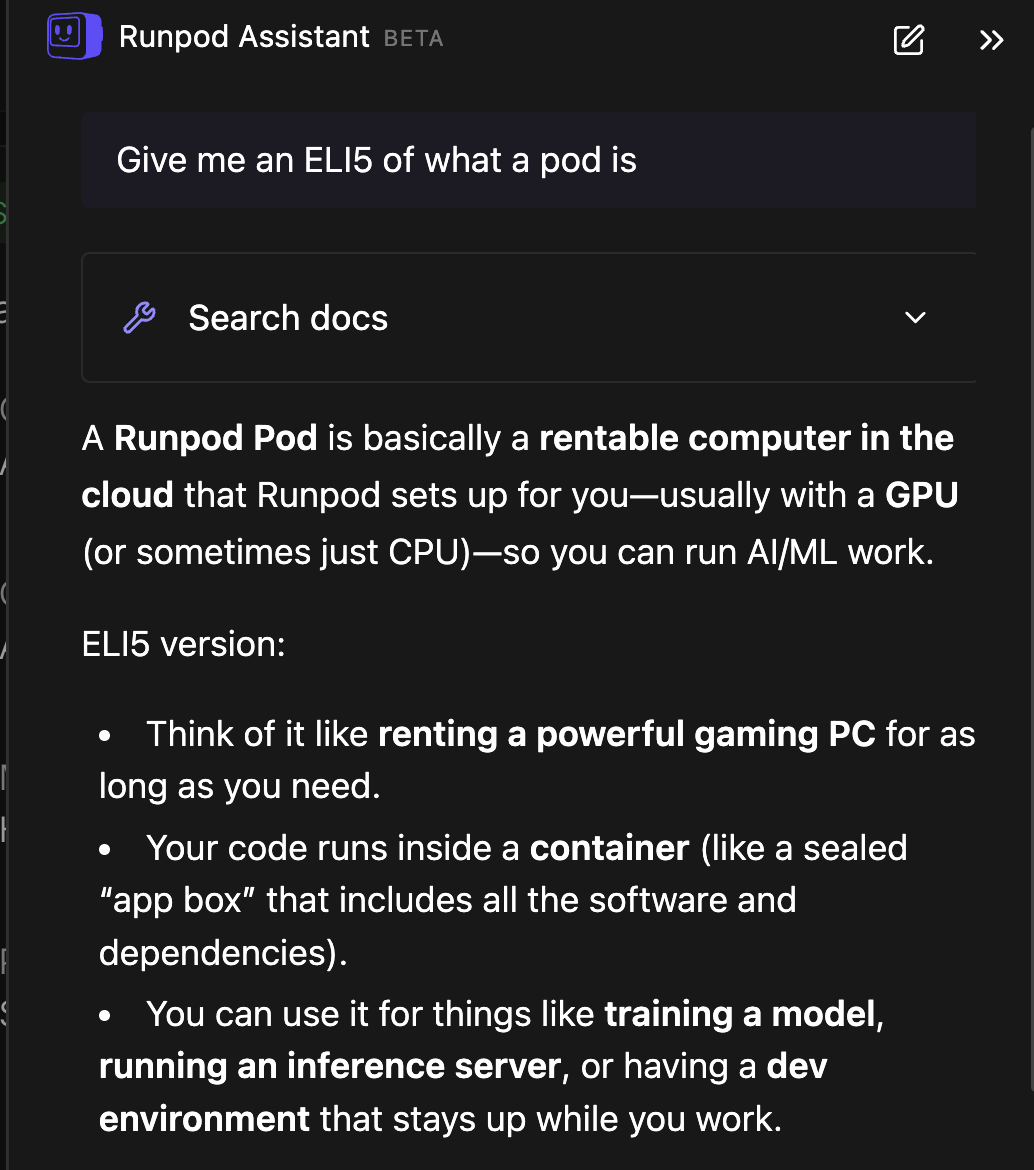

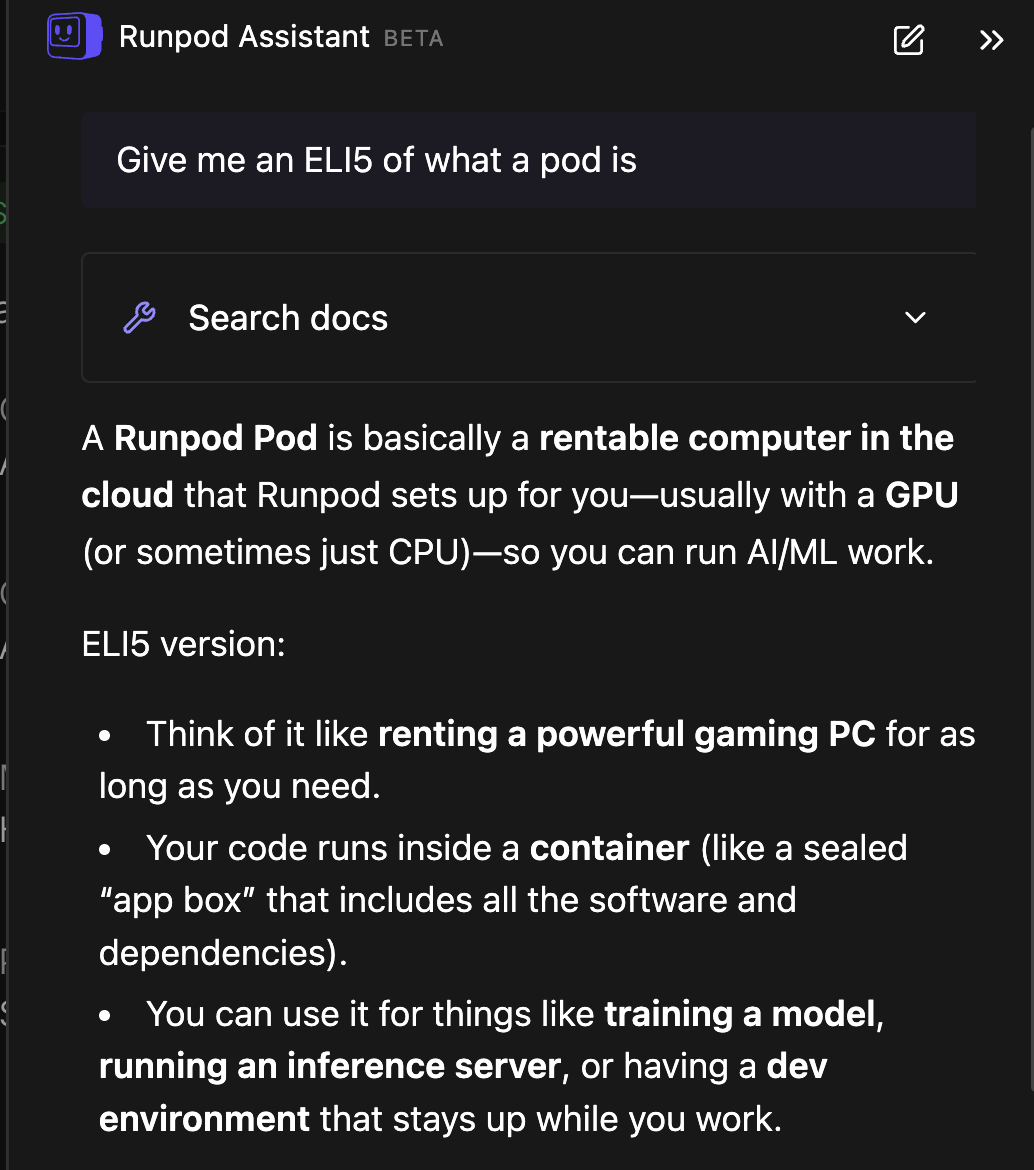

When you open the Assistant, it will suggest a few prompts to get started; things like "How do I use persistent storage?" or "Create a template." But you can ask it anything the way you'd ask any LLM. For example, asking for an ELI5 of what a Pod is will get you a clear, plain-language explanation.

You can ask the Assistant to start a specific Pod by ID, and it will look it up and start it for you. Because there's a cost involved, it will always ask you to approve the action before executing. The same goes for Serverless Endpoints: you can say something like "update active workers for my serverless endpoint to three," and it will find the endpoint, show you what it's about to do, and wait for your confirmation.

This is where things get really powerful. Instead of managing resources one at a time, you can issue broader commands like: "Stop all costs for Serverless Endpoints and Pods. Reduce active workers to zero and stop any running pods."

The Assistant will give you a summary of everything it's about to do, ask you to confirm, and then execute the operations across multiple Endpoints and Pods simultaneously. You're not limited to feeding it specific IDs. You can just say "stop all Pods" and it will figure out what's running, give you a status report, and let you shut everything down in one fell swoop.

The Assistant can help you think through GPU selection for your workload. Ask it something like "I want to run Llama 3.5 9B. What's the best GPU?" and it'll come back with a plan: such as an A40 as a solid all-around pick, a 4090 if you want the cheapest option that works (24 GB is enough), or an A100 if you want maximum throughput. It'll also give you quick rules of thumb, like 24 GB of VRAM for single-user workloads and 80 GB for high concurrency.

Once you've decided on a GPU, you can ask "Which data centers have high availability for A100 PCIe?" and get your answer immediately. This is actually much faster than doing it through the UI, where you'd need to go to the deploy screen and click through individual data centers one by one to check availability.

The Assistant and the API complement each other. For tasks that need specific and exact parameters like programmatic integrations, automated pipelines, precise configurations, the API is the better choice. But for more generalized queries, exploration, and quick management tasks, the Assistant has your back. Some things are just more naturally expressed in plain language than through API calls.

The Runpod Assistant is free to use and available now. Just click the chat icon in the upper right corner of your console to get started. We've only scratched the surface of what the Assistant can do, so experiment with it and see what works for your workflow.

Have ideas for useful prompts or features? We'd love to hear about them;drop by our Discord and let us know.

We've just released a new tool to get you help faster and streamline your account management.

.jpeg)

We're excited to announce the launch of the Runpod Assistant: a free, built-in chatbot that lets you manage your pods, endpoints, and infrastructure using plain English. Whether you need to spin up a pod, check GPU availability across data centers, or shut everything down at once, the Assistant puts those actions a conversation away.

The Runpod Assistant is a chatbot available at any time from the upper right corner of the Runpod console. It's knowledgeable about the Runpod ecosystem and AI more generally, and it offers functionality comparable to our REST API, but through natural language instead of code.

Think of it as a conversational interface to your Runpod account. You can ask it questions, give it commands, or use it as a sounding board when you're trying to figure out the right GPU for a workload.

Here are some things you can do with the Assistant right out of the box:

When you open the Assistant, it will suggest a few prompts to get started; things like "How do I use persistent storage?" or "Create a template." But you can ask it anything the way you'd ask any LLM. For example, asking for an ELI5 of what a Pod is will get you a clear, plain-language explanation.

You can ask the Assistant to start a specific Pod by ID, and it will look it up and start it for you. Because there's a cost involved, it will always ask you to approve the action before executing. The same goes for Serverless Endpoints: you can say something like "update active workers for my serverless endpoint to three," and it will find the endpoint, show you what it's about to do, and wait for your confirmation.

This is where things get really powerful. Instead of managing resources one at a time, you can issue broader commands like: "Stop all costs for Serverless Endpoints and Pods. Reduce active workers to zero and stop any running pods."

The Assistant will give you a summary of everything it's about to do, ask you to confirm, and then execute the operations across multiple Endpoints and Pods simultaneously. You're not limited to feeding it specific IDs. You can just say "stop all Pods" and it will figure out what's running, give you a status report, and let you shut everything down in one fell swoop.

The Assistant can help you think through GPU selection for your workload. Ask it something like "I want to run Llama 3.5 9B. What's the best GPU?" and it'll come back with a plan: such as an A40 as a solid all-around pick, a 4090 if you want the cheapest option that works (24 GB is enough), or an A100 if you want maximum throughput. It'll also give you quick rules of thumb, like 24 GB of VRAM for single-user workloads and 80 GB for high concurrency.

Once you've decided on a GPU, you can ask "Which data centers have high availability for A100 PCIe?" and get your answer immediately. This is actually much faster than doing it through the UI, where you'd need to go to the deploy screen and click through individual data centers one by one to check availability.

The Assistant and the API complement each other. For tasks that need specific and exact parameters like programmatic integrations, automated pipelines, precise configurations, the API is the better choice. But for more generalized queries, exploration, and quick management tasks, the Assistant has your back. Some things are just more naturally expressed in plain language than through API calls.

The Runpod Assistant is free to use and available now. Just click the chat icon in the upper right corner of your console to get started. We've only scratched the surface of what the Assistant can do, so experiment with it and see what works for your workflow.

Have ideas for useful prompts or features? We'd love to hear about them;drop by our Discord and let us know.

The most cost-effective platform for building, training, and scaling machine learning models—ready when you are.