Multi-node GPU clusters in the cloud. Deploy in minutes, not months.

Run multi-node GPU clusters in minutes with high-performance networking and no setup overhead, with flexible pricing for on-demand and reserved capacity.

Trusted by teams running production AI

Trusted by research teams and AI companies building at scale

Big training runs stall on infrastructure, not research. Getting access to 64 GPUs on a traditional cloud takes procurement cycles, capacity negotiations, and contracts that outlast the project. And when you finally get the hardware, the networking isn’t benchmarked, you’re the one debugging NCCL.

CLUSTER OPTIONS

Choose how you run your cluster

Start instantly or reserve dedicated capacity for long-term workloads.

Clusters

Multi-node compute ready in minutes, with no contract required to get started.

- Up to 64 H100/H200 GPUs

Available now, with more capacity by request. - InfiniBand + RoCE v2 networking

Near bare-metal NCCL performance, validated by SemiAnalysis. - Slurm pre-configured

Launch distributed workloads without building orchestration yourself. - Per-hour billing

No reservation required. - Deploy in minutes

Tear down anytime.

Reserved Clusters

Dedicated capacity with predictable pricing and long-term support for sustained workloads.

- 10,000+ GPUs reserved capacity

For larger training runs and sustained demand. - Single-tenant infrastructure

Isolated environments for teams that need supply certainty. - One-month minimum commitment

Built for workloads that need predictable access. - Committed pricing with volume discounts

Custom pricing for long-term planning. - SLA-backed uptime and dedicated support

Priority support for critical workloads.

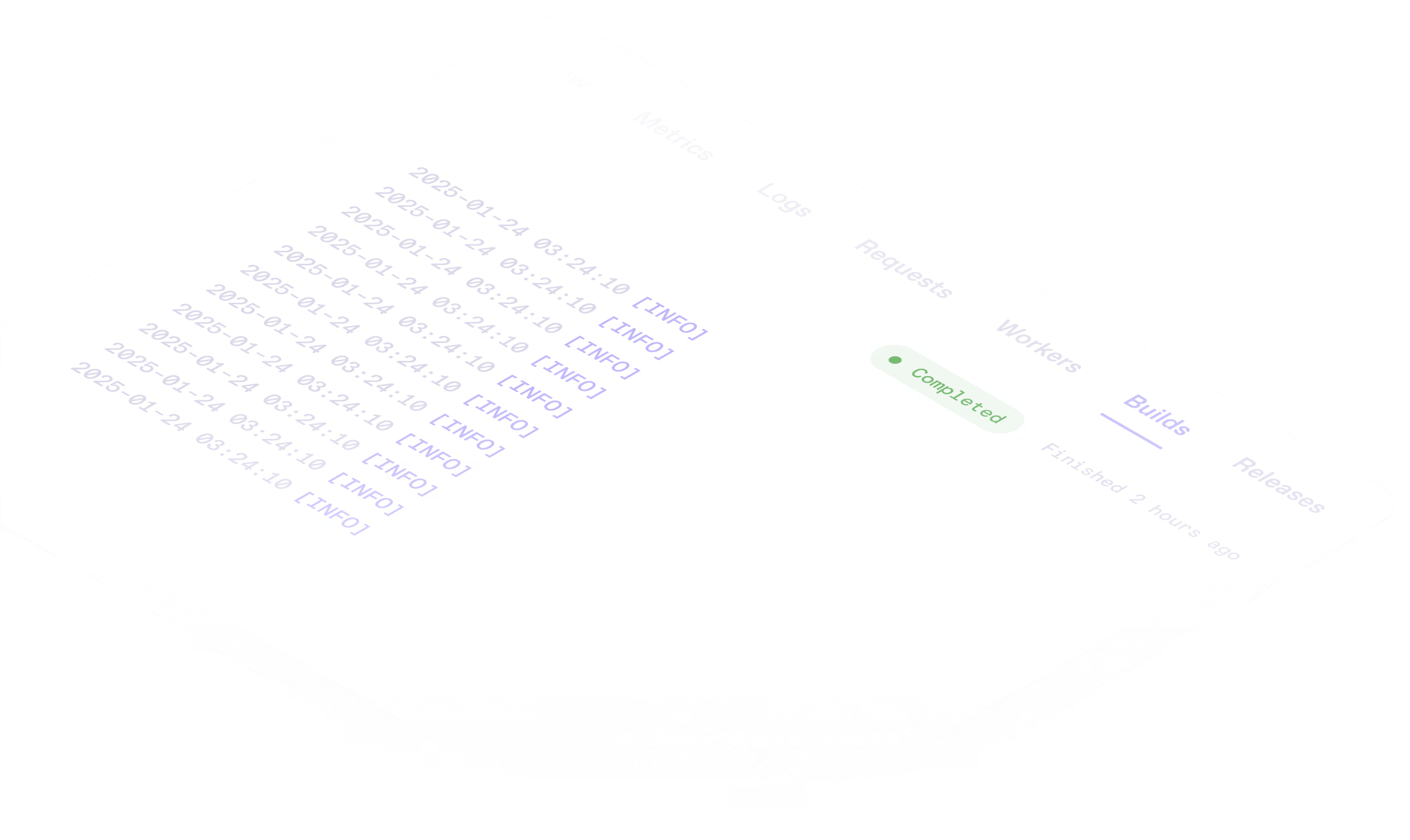

Operations and tooling built for distributed workloads

Tools and infrastructure designed for how teams actually run clusters.

Slurm-native orchestration

Run distributed workloads with built-in scheduling and resource management.

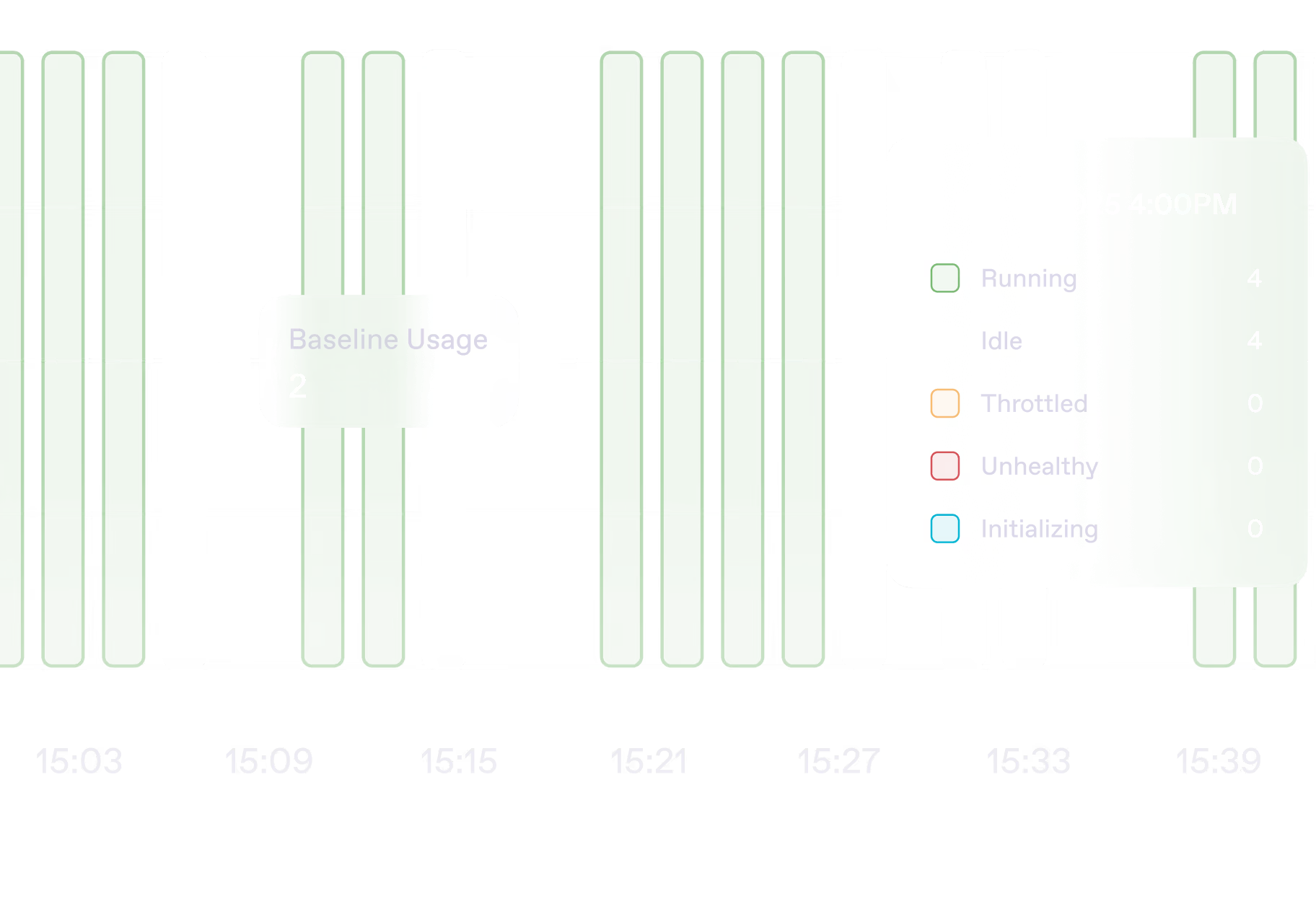

Dynamic node management

Add or scale nodes without rebuilding your cluster.

Shared storage volumes

Persistent storage accessible across nodes for large datasets and models.

Container-native workflows

Bring your own Docker images and manage your full software stack.

Built for the workloads that push beyond a single node

Runpod Clusters support distributed training, inference, research, and compute-heavy workloads that require more scale, coordination, and performance than a single machine can provide.

Foundation model training

Train large models across multi-node GPU clusters at scale.

Fine-tuning at scale

Fine-tune models on large datasets using on-demand or reserved clusters.

Distributed inference

Serve models across multiple nodes for high-throughput inference.

AI research

Run experiments, evaluations, and RL workloads on distributed compute.

Simulation and HPC

Run rendering, simulation, and multi-node compute workloads at scale.

Batch processing

Process large datasets and embeddings beyond single-node limits.

"Deep Cogito trained its 671B mixture-of-experts model on Runpod Clusters, demonstrating the scale possible with distributed infrastructure on demand."

Foundation model training

"The main value proposition for us was the flexibility Runpod offered. We were able to scale up effortlessly to meet the demand at launch."

Production inference scaling

"Runpod cluster networking delivered near bare-metal NCCL performance in third-party benchmarking."

Independent performance benchmarking

Operations and tooling built for distributed workloads

Tools and infrastructure designed for how teams actually run clusters.

SOC 2 Type II

Certified for security, availability, and confidentiality.

HIPAA compliance

HIPAA-compliant environments available for regulated workloads.

.webp)

Single-tenant infrastructure

Isolated environments for strict data governance and separation.

On-demand clusters available now

Spin up multi-node clusters with per-hour pricing and no long-term commitment.

Reserved capacity for sustained workloads

Secure dedicated infrastructure for larger training runs and predictable production demand.

Committed pricing with volume discounts

Reserved deployments include pricing structures designed for long-term capacity planning.

Built to scale from 64 to 10,000+ GPUs

Start with self-serve clusters or work with our team on dedicated single-tenant infrastructure.

What reserved capacity unlocks

Work directly with our team to design infrastructure, pricing, and support tailored to your production requirements.

Dedicated infrastructure

Single-tenant cluster infrastructure fully reserved for your workloads.

Predictable capacity

Secure a baseline GPU allocation with options to burst as demand increases.

Volume pricing

Access committed pricing and volume discounts for sustained workloads.

SLA-backed reliability

Uptime guarantees and contractual SLAs designed for production environments.

Dedicated support

Direct access to engineering support, escalation paths, and onboarding assistance.

Compliance & contracts

SOC 2, BAA, and DPA documentation, along with flexible contract structures.